AI: The most important buzzword for tech industries all over the globe.

Naturally, makers of AI chips aren’t going to miss the chance to reshuffle the market that such an important technology offers. Intel, NVIDIA, Qualcomm and other chip giants have already lined up their troops on the AI field. FPGA colossus Xilinx has also joined the battle. With a vengeance.

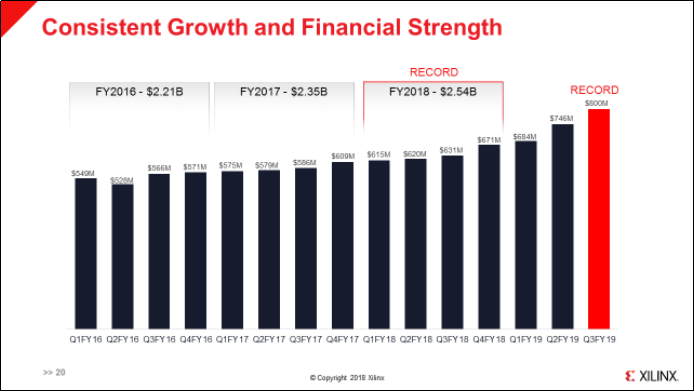

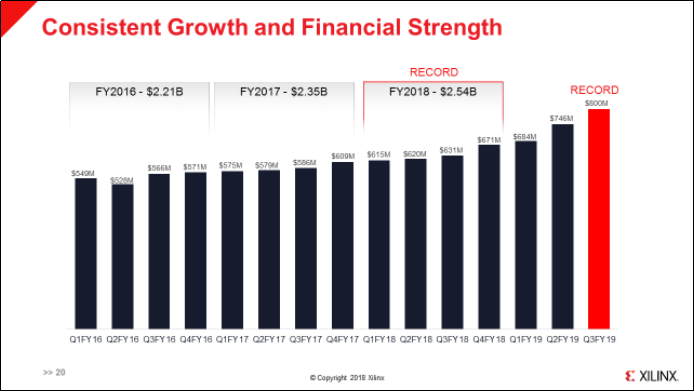

On January 23, 2019 (U.S. time), Xilinx, the long-established American chip giant, announced its financial results for the third quarter of fiscal year 2019, covering the period ending December 29, 2018: revenue of US$800 million in a single quarter. This marked Xilinx’s 13th consecutive quarter of year-over-year revenue growth, and its stock price stood at US$106 per share. Until the end of 2016, the stock had oscillated between US$40 and US$50 per share; at the time of writing it has surpassed US$130.

(Source: Xilinx)

Xilinx is expected to be the next NVIDIA and, alongside Apple, NVIDIA, AMD and other heavyweights, is an important 7 nm customer and partner of TSMC. How will Xilinx embrace its newfound position?

Why FPGA Will Emerge from the AI Wave

For tech-savvy readers, the first thing that comes to mind about Xilinx is the FPGA (field-programmable gate array). Xilinx and Altera, which Intel acquired, stand side by side as the two reigning FPGA champions worldwide. FPGAs are also the key to Xilinx’s rise in this AI wave.

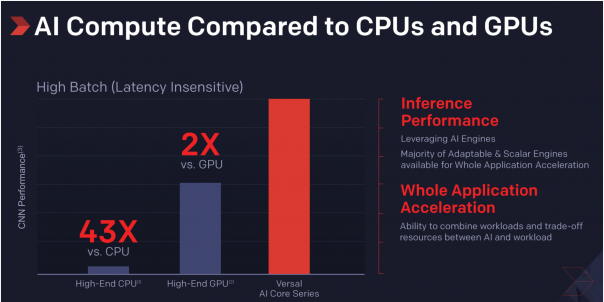

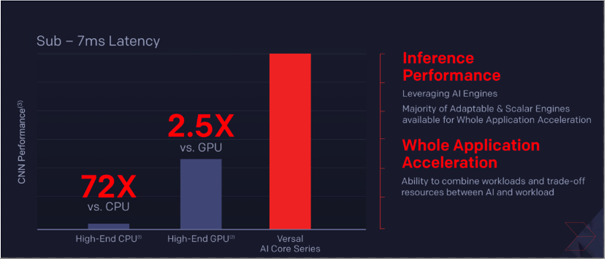

(Source: Xilinx)


FPGAs are inherently parallel and highly configurable. They are used across many vertical markets, such as measurement, communications infrastructure, ADAS (advanced driver assistance systems), datacenter accelerators, and aerospace and defense. FPGAs have also long played an important part in the early production runs of end products, and were often replaced by ASICs or ASSPs only once production volumes had scaled up.

FPGAs were not without their weaknesses, however. Early development was relatively demanding, since developers had a hard time porting code written in C and other common programming languages to FPGAs. Consequently, FPGAs were never as ubiquitous as CPUs and GPUs.

However, Xilinx has worked for years to improve its development tools and to integrate ARM CPUs into its devices. This opened Xilinx products to most ARM engineers, which widened the pool of potential FPGA users and gave FPGAs a sizable increase in exposure.

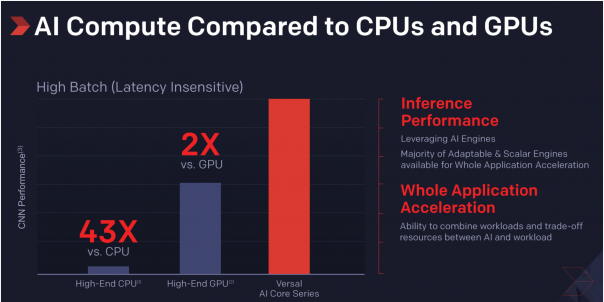

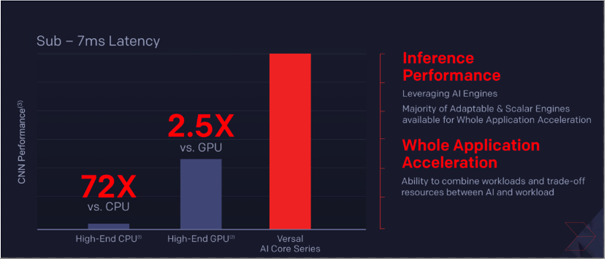

Armed with FPGAs, Xilinx is competing with NVIDIA and Intel for share of the AI accelerator market, which divides mainly into model training and inference. Intel, NVIDIA and a few other companies have held an oligopoly in training accelerators since 2017, but no new training chips have been released of late, whereas inference accelerators have seen numerous developments. This should come as no surprise: there is no shortage of business opportunities in AI inference.

Fast, Furious and Flaunting Better Specs

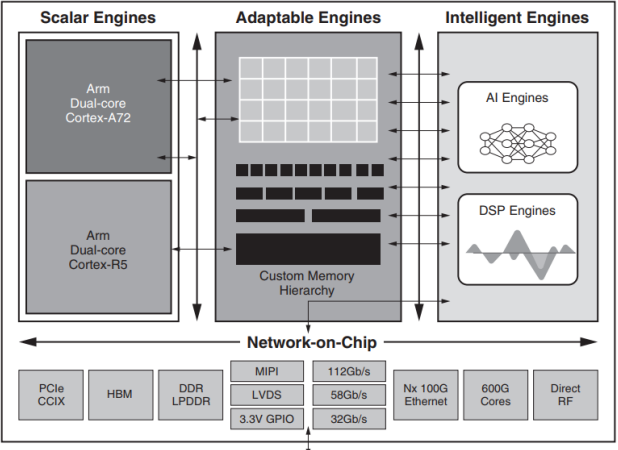

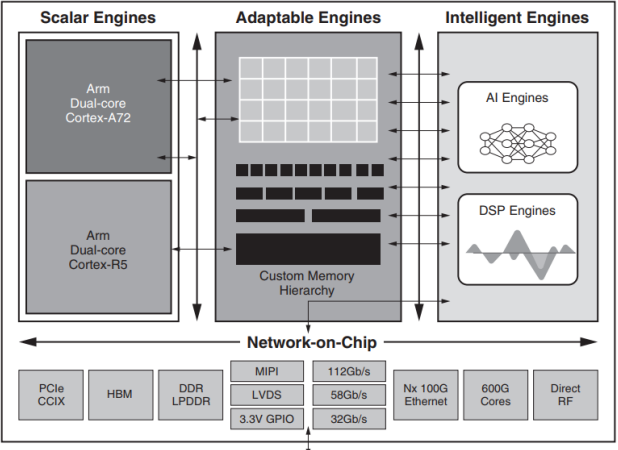

AI accelerator architectures span GPUs, FPGAs, ASICs and neuromorphic chips. The GPU camp includes NVIDIA, while the FPGA camp includes Intel Altera and Xilinx. Both FPGA vendors have been releasing details about their new products over the last half-year. Altera is still sticking to quad-core Cortex-A53s, whereas Xilinx’s ACAP (Adaptive Compute Acceleration Platform) series uses a dual-core Cortex-A72 design and adds AI and DSP engines to meet the demands of different workloads. By comparison, Xilinx’s offering is clearly the more promising of the two.

▲Xilinx’s ACAP architecture blueprint (Source: Xilinx)

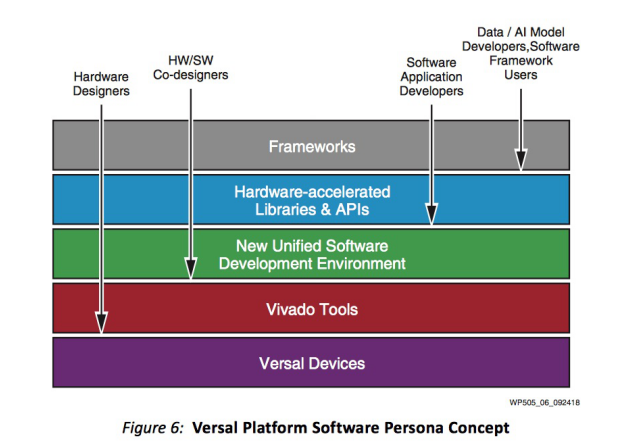

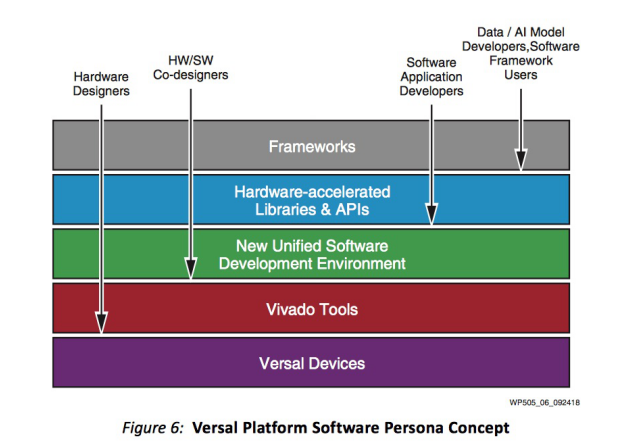

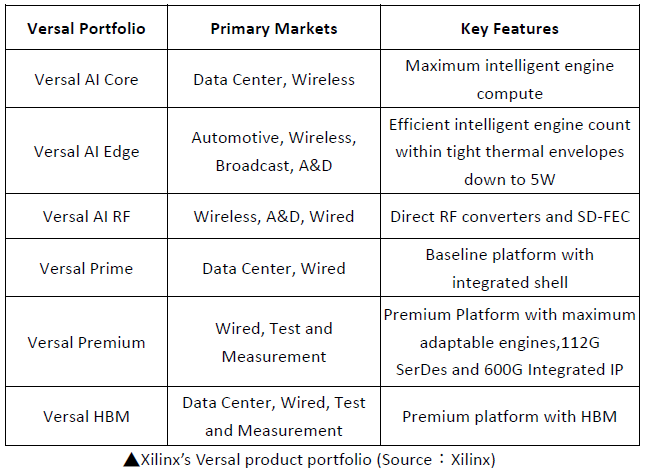

What’s more, Xilinx isn’t neglecting development tools. The company is investing heavily in speeding up development so that its users can keep pace with a rapidly shifting market. With Versal components and Vivado programming tools at its core, ACAP brings hardware and software programmers together, and its libraries and APIs cater to developers of software applications and AI models.

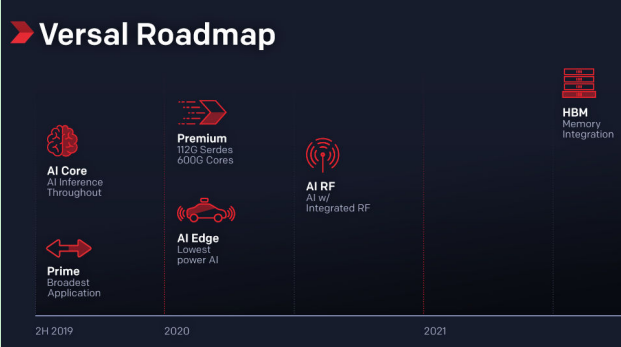

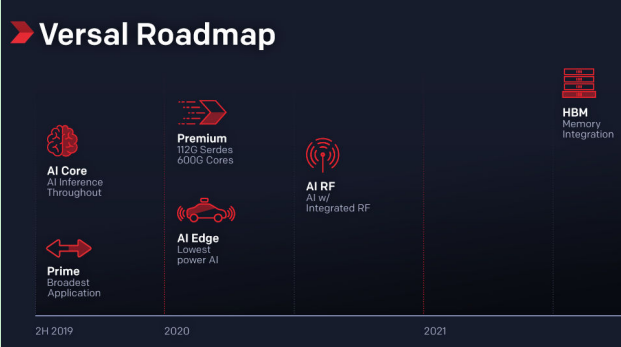

Another of Xilinx’s advantages lies in its partnership with TSMC, a 7 nm provider with ample capacity, which should ensure successful rollouts of the AI Core and Prime ACAPs by the second half of 2019. Meanwhile, Intel’s murky outlook for 10 nm production capacity still casts doubt on when its FPGA products will reach customers’ hands. All this bodes well for Xilinx: its ACAPs are likely to gain the upper hand in the market.

Versal Platform Software Persona Concept (Source: Xilinx)

As for FPGAs in datacenters, last October Xilinx released Alveo, the inference accelerator that datacenters need and the product that allowed Xilinx to join hands with Chinese internet giants Baidu and Inspur. Cloud service providers (CSPs) continue to innovate on their business models, which will naturally give rise to a variety of workloads. Combined with the inherent implementation flexibility of FPGAs, this makes FPGA-based accelerator cards well suited to giving datacenters a multitude of configuration options for different workloads and scenarios.

On the whole, although Xilinx stayed on 16 nm processes for quite a while, it spent that time developing product lines for a wide range of markets, including automotive, telecommunications, industrial automation, measurement, and aerospace and defense; it then went on to target datacenters with the release of inference accelerators. Two roads diverged in the vast AI market, yet Xilinx carved a path of its own: FPGAs, which now stand alongside CPUs and GPUs.

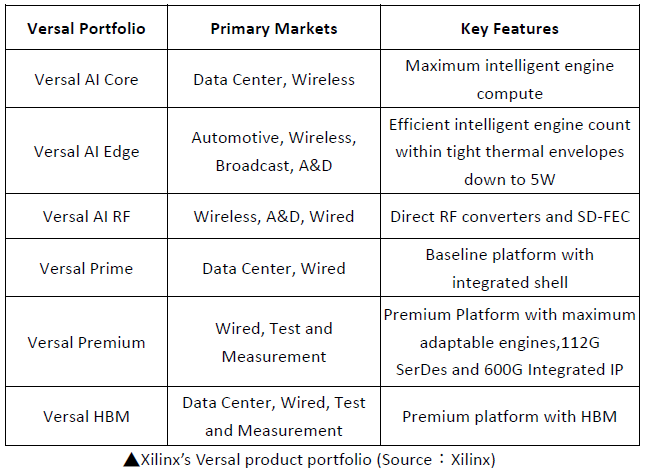

▲Xilinx’s next-generation Versal product roadmap (Source: Xilinx)